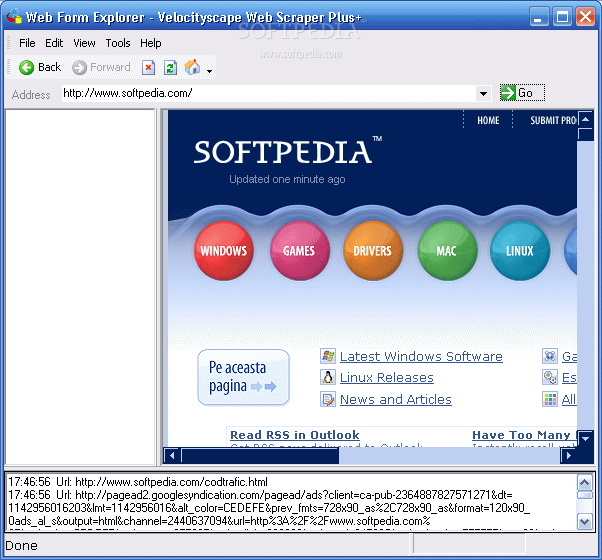

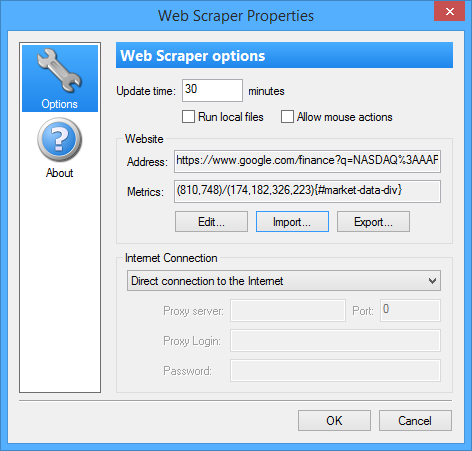

There's latitude/longitude data that I'm trying to get from several different pages. From the main page, you can click on any one property, and when you Inspect it you can see the information under 'data-lat' and 'data-lng'. I can get the lat/long data from individual pages, and I can get the hrefs from page 1 that go into each property's page, but I'm having difficulty iterating through all 9 pages and extracting the data. Here's what I have so far:

```python
from selenium import webdriver
from selenium.webdriver.common.by import By
from selenium.webdriver.chrome.options import Options

chrome_options = Options()  # this line is not in the post as preserved; add_argument needs it
chrome_options.add_argument("--headless")  # Use headless browser since we're opening several urls (one per city where Allied REIT has properties)

DRIVER_PATH = r'C:\Program Files (x86)\chromedriver'  # add chromedriver to local path
driver = webdriver.Chrome(executable_path=DRIVER_PATH, chrome_options=chrome_options)

# (the part of the post that builds `url` for each page and loads it was not preserved)
containers = driver.find_elements(By.CSS_SELECTOR, 'div a')  # links to each property
print(url)  # Returns all the urls from pages 1-9.
```

The website you want to scrape doesn't even use dynamically loaded content, which makes selenium totally unnecessary to begin with. Also, selenium is not a web scraping library. I have no idea why, but selenium must be one of the most misused libraries among new Python programmers. It's meant for web application testing and automation, not for scraping/crawling.

What you want to use instead is a web crawling framework like scrapy, which provides methods and classes to deal with all the common web scraping requirements. It has functions for pagination, it supports callbacks for using different parsers for different sub-sites, and it provides link extractors to find and follow URLs, asynchronous request handling, logging, automatic request throttling, file exports for your results and much more. In short, it was written to provide all the tools you need to make writing web scrapers as comfortable and easy as possible.

If we talk about web crawling, selenium is a shovel. Can you write a web crawler using selenium? Sure, but the final product will be a lot slower and it will take a lot more time and effort to write.

If you already know selenium and it's enough for what you want (in terms of speed, maintainability etc.), using it rather than learning something new would definitely be preferable, I agree. (Unless you regularly need to write web crawlers; then learning a dedicated library would still be preferable imho.) My main argument was that OP obviously isn't too familiar with selenium either, and in this case I'd recommend they invest their time in learning scrapy rather than selenium (which we agree isn't really the best tool for the job). Especially because selenium isn't the most intuitive piece of software either.
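Because the site serves static HTML, the `data-lat` and `data-lng` attributes mentioned in the question can be read from the raw page source without a browser. Here is a minimal sketch of that idea. It uses only Python's standard library rather than scrapy, purely to stay dependency-free. The `?page=N` URL scheme, the `example.com` address, and the sample markup are all assumptions for illustration, not taken from the actual site.

```python
from html.parser import HTMLParser
from urllib.request import urlopen


class LatLngParser(HTMLParser):
    """Collect (lat, lng) pairs from any tag carrying data-lat and data-lng."""

    def __init__(self):
        super().__init__()
        self.coords = []

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        lat, lng = attr_map.get("data-lat"), attr_map.get("data-lng")
        if lat is not None and lng is not None:
            self.coords.append((float(lat), float(lng)))


def extract_coords(html):
    """Return every (lat, lng) pair found in an HTML string."""
    parser = LatLngParser()
    parser.feed(html)
    return parser.coords


def page_urls(base_url, last_page):
    """Build listing URLs, assuming a hypothetical ?page=N pagination scheme."""
    return [f"{base_url}?page={n}" for n in range(1, last_page + 1)]


def fetch(url):
    """Download a page as text with a plain HTTP request (no browser)."""
    with urlopen(url) as response:
        return response.read().decode("utf-8", errors="replace")


# Offline demo on made-up markup, so nothing is requested from a real site.
SAMPLE_HTML = '<div class="map" data-lat="43.6452" data-lng="-79.3950"></div>'
print(extract_coords(SAMPLE_HTML))
```

On a real site, one would loop over `page_urls(...)`, collect the property links from each listing page, and call `extract_coords(fetch(link))` on every property page. A framework like scrapy handles that link following, throttling, and export for you, which is the point of the recommendation above.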
